The write-up below consists of notes I took while watching the Multi-Threaded Programming module from the Advanced Operating Systems refresher course [1]. I watched this third module before the second (on file systems, the next lecture series I’ll watch) because I had started tackling the homework assignment, which has us students debug a buggy multi-threaded program written with the POSIX threading library.

Although I was able to fix many of the problems by reading the man pages of various library calls (e.g. pthread_join [2], pthread_exit [3]), I wanted to back my troubleshooting skills with some theoretical knowledge. I’m glad I did, because I had forgotten that if the main thread (the first thread, created when the OS starts the process) calls exit or returns from main, the whole process terminates and every thread exits with it. To avoid this, the main thread can call pthread_join, which makes it wait for a specific thread to terminate before continuing.

Recap

Writing multi-threaded code is difficult and requires attention to detail. Nonetheless, multi-threading lets us parallelize work across cores and, even on a single core, overlap computation with slow I/O. Context switches between threads are cheaper than context switches between processes, since threads share the same address space. Each thread does have its own stack: for a joinable thread, its resources are released only once another thread joins it, whereas a detached thread is cleaned up automatically when it exits. When using threads, there are a few design patterns to choose from: team, dispatcher, and pipeline. Selecting the right one depends on the application’s requirements. Finally, when writing multi-threaded programs, the programmer must keep in mind two distinct problems: mutual exclusion and synchronization. For the program to be correct, it must exhibit concurrency, absence of deadlock, and mutual exclusion of shared resources/memory.

Lesson 3: Multi-threaded programming

Overview

Writing correct multi-threaded code is hard, not a trivial task, and there is a lot to consider. In particular, we need to differentiate between mutual exclusion and synchronization, which are two different issues.

Parallel Processing

Summary

We can launch multiple threads to execute work in parallel, each thread running on a different core.
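As a rough illustration of splitting work across threads, here is a minimal sketch that sums an array in slices. The names `parallel_sum`, `sum_slice`, and `NUM_WORKERS` are my own and not from the lecture, and whether the threads actually land on different cores is up to the OS scheduler.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

#define NUM_WORKERS 4  /* hypothetical worker count, ideally one per core */

/* One slice of the input per worker; each worker writes only its own sum. */
struct slice {
    const int *data;
    size_t     len;
    long       sum;
};

static void *sum_slice(void *arg)
{
    struct slice *s = arg;
    s->sum = 0;
    for (size_t i = 0; i < s->len; i++)
        s->sum += s->data[i];
    return NULL;
}

/* Splits data[0..len) across NUM_WORKERS threads and returns the total. */
long parallel_sum(const int *data, size_t len)
{
    assert(data != NULL);

    pthread_t    tids[NUM_WORKERS];
    int          started[NUM_WORKERS];
    struct slice slices[NUM_WORKERS];
    size_t       chunk = len / NUM_WORKERS;

    for (int i = 0; i < NUM_WORKERS; i++) {
        size_t offset = (size_t)i * chunk;
        slices[i].data = data + offset;
        /* The last worker also takes the remainder. */
        slices[i].len = (i == NUM_WORKERS - 1) ? len - offset : chunk;
        started[i] = (pthread_create(&tids[i], NULL, sum_slice, &slices[i]) == 0);
        if (!started[i])
            sum_slice(&slices[i]);  /* fall back to the calling thread */
    }

    long total = 0;
    for (int i = 0; i < NUM_WORKERS; i++) {
        if (started[i])
            pthread_join(tids[i], NULL);  /* wait before reading the sum */
        total += slices[i].sum;
    }
    return total;
}
```

Because every worker touches only its own slice and its own `sum` field, this particular example needs no locking; the joins are what make the results safe to read.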

Asynchronous Computation

Summary

But even with a single core, there’s a benefit to designing multi-threaded applications, especially for I/O work: while one thread waits out the latency, another can run.

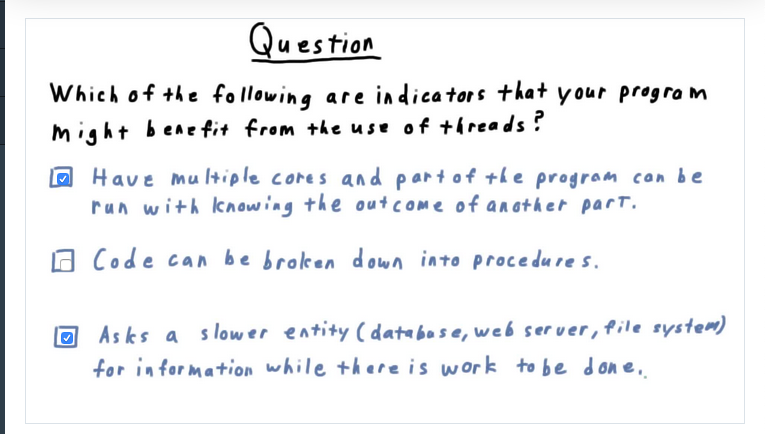

Quiz Threads

Summary

Threads are beneficial when multiple cores are available and each thread can run independently. They also help when a slower entity is involved, like a database, web server, or file system.

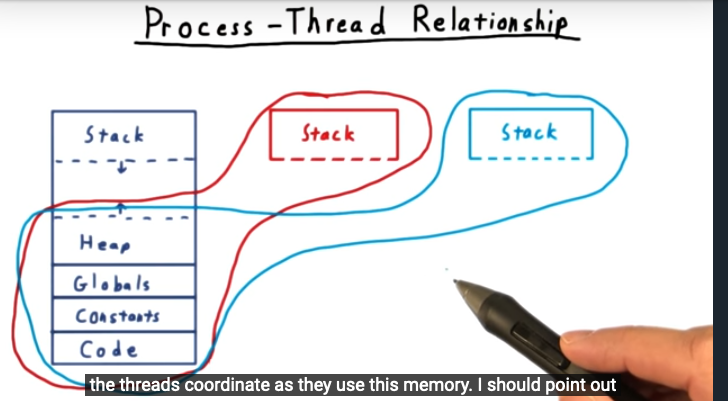

Process-Thread relationship

Summary

A thread shares the heap, global variables, constants, and code with the other threads in its process, but not the stack: each thread gets its own stack. Threads communicate through shared memory, unlike processes or distributed systems, which typically use message passing.
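To make the shared-versus-private split concrete, here is a small sketch of my own (the names `shared_counter`, `bump`, and `run_two_threads` are made up for illustration). Each thread increments a local variable on its own stack and a global that all threads share.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

int shared_counter = 0;  /* global: a single copy, visible to every thread */

static void *bump(void *out)
{
    int local_counter = 0;  /* lives on this thread's own stack */
    local_counter++;
    shared_counter++;
    *(int *)out = local_counter;  /* report what this thread saw locally */
    return NULL;
}

/* Runs two threads one after the other; returns 0 on success, -1 otherwise. */
int run_two_threads(int locals[2])
{
    assert(locals != NULL);
    for (int i = 0; i < 2; i++) {
        pthread_t tid;
        if (pthread_create(&tid, NULL, bump, &locals[i]) != 0)
            return -1;
        /* Joining before starting the next thread avoids a data race here. */
        pthread_join(tid, NULL);
    }
    return 0;
}
```

After a successful call, both entries of `locals` are 1 (each thread started from its own fresh local), while `shared_counter` has gone up by 2. The threads run back to back on purpose: letting them update the global at the same time would need a mutex.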

Summary

Aced the quiz because of my prior knowledge of multi-threading, a topic I learned a lot about during Graduate Introduction to Operating Systems. Glad that I took Advanced Operating Systems after.

Joinable and Detached Threads

Summary

By default, POSIX creates joinable threads, but we can create them as detached when we don’t care about their return values. I also learned here that there are two scenarios for how a multi-threaded program ends. In the first, the main thread reaches return or calls exit, and all threads exit as well. In the second, the main thread calls pthread_join, which lets it wait for a specific thread to finish, similar to waitpid for processes. This makes sense.
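For the detached case, a minimal sketch might look like the following. The helper `spawn_detached` and the placeholder `background_task` are hypothetical names of mine; the pthread attribute calls are the standard ones.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

/* Hypothetical fire-and-forget task; nobody collects its return value. */
void *background_task(void *arg)
{
    (void)arg;
    return NULL;
}

/* Starts fn(arg) on a detached thread. Returns 0 on success, else an error code. */
int spawn_detached(void *(*fn)(void *), void *arg)
{
    assert(fn != NULL);

    pthread_attr_t attr;
    int rc = pthread_attr_init(&attr);
    if (rc != 0)
        return rc;

    rc = pthread_attr_setdetachstate(&attr, PTHREAD_CREATE_DETACHED);
    if (rc == 0) {
        pthread_t tid;  /* never joined: the system reclaims the thread when it exits */
        rc = pthread_create(&tid, &attr, fn, arg);
    }
    pthread_attr_destroy(&attr);
    return rc;
}
```

Keep in mind that a detached thread still dies if the main thread returns from main or calls exit. If main should end without killing the others, it can call pthread_exit(NULL) instead, which terminates only the main thread.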

Joinable Threads

Summary

Use pthread_join to have the main thread wait for another thread to complete: the pthread_join call does not return until the thread passed as its argument has completed or terminated.
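Here is a short sketch of joining a thread and collecting its result. The `struct job` and `square_in_thread` names are assumptions for illustration only; the thread hands back a pointer through its return value, which pthread_join stores in its second argument.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

struct job {
    int input;
    int output;
};

/* Thread body: squares the input and returns the job pointer as its result. */
static void *square(void *arg)
{
    struct job *j = arg;
    j->output = j->input * j->input;
    return j;
}

/* Computes x * x on another thread. Returns 0 on success, else an error code. */
int square_in_thread(int x, int *result)
{
    assert(result != NULL);

    struct job j = { .input = x, .output = 0 };
    pthread_t tid;
    int rc = pthread_create(&tid, NULL, square, &j);
    if (rc != 0)
        return rc;

    void *ret = NULL;
    rc = pthread_join(tid, &ret);  /* blocks until the thread has terminated */
    if (rc != 0)
        return rc;

    *result = ((struct job *)ret)->output;
    return 0;
}
```

The job lives on the caller's stack, which is safe here only because the caller joins before returning.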

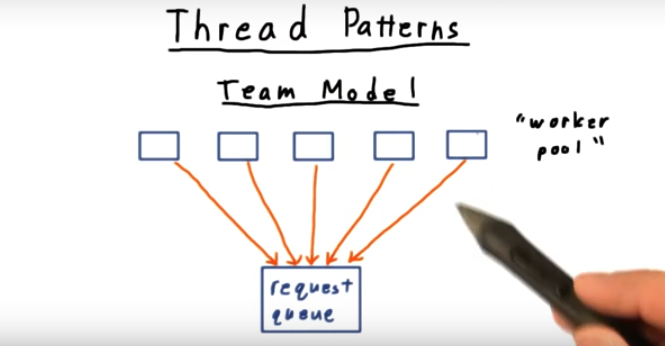

Thread Patterns

Summary

Three thread patterns are discussed: the team model (each thread dequeues work from a shared queue and performs it, with idle threads sitting around waiting for work), the dispatcher model (similar to boss/worker: the boss dispatches work to the workers, which can make the boss a bottleneck), and the pipeline model (instead of dividing work by request, each worker performs one part of the activity and then passes the work on to the next thread).
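Of the three, the team model is the easiest to sketch. In the version below (my own simplification, not the lecture's code), the "queue" is just a counter of the next unclaimed task index, and `TEAM_SIZE`, `NUM_TASKS`, and the doubling "work" are placeholders.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

#define TEAM_SIZE 3  /* hypothetical number of team members */
#define NUM_TASKS 8  /* hypothetical number of queued tasks */

struct work_queue {
    pthread_mutex_t lock;
    int next;                /* index of the next unclaimed task */
    int results[NUM_TASKS];  /* slot i is written only by whoever claims task i */
};

static void *team_member(void *arg)
{
    struct work_queue *q = arg;
    for (;;) {
        /* Claim a task under the lock so no two members take the same one. */
        pthread_mutex_lock(&q->lock);
        int task = (q->next < NUM_TASKS) ? q->next++ : -1;
        pthread_mutex_unlock(&q->lock);

        if (task < 0)
            return NULL;              /* queue drained */
        q->results[task] = task * 2;  /* stand-in for real work */
    }
}

/* Runs the team over all tasks; returns how many threads were started. */
int run_team(int results[NUM_TASKS])
{
    assert(results != NULL);

    struct work_queue q = { .next = 0 };
    pthread_mutex_init(&q.lock, NULL);

    pthread_t tids[TEAM_SIZE];
    int started = 0;
    for (int i = 0; i < TEAM_SIZE; i++)
        if (pthread_create(&tids[started], NULL, team_member, &q) == 0)
            started++;
    if (started == 0)
        team_member(&q);  /* no helpers: the caller does the work itself */
    for (int i = 0; i < started; i++)
        pthread_join(tids[i], NULL);

    for (int i = 0; i < NUM_TASKS; i++)
        results[i] = q.results[i];
    pthread_mutex_destroy(&q.lock);
    return started;
}
```

A dispatcher would differ by having one boss thread hand tasks to specific workers, and a pipeline by chaining stages with a queue between each pair.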

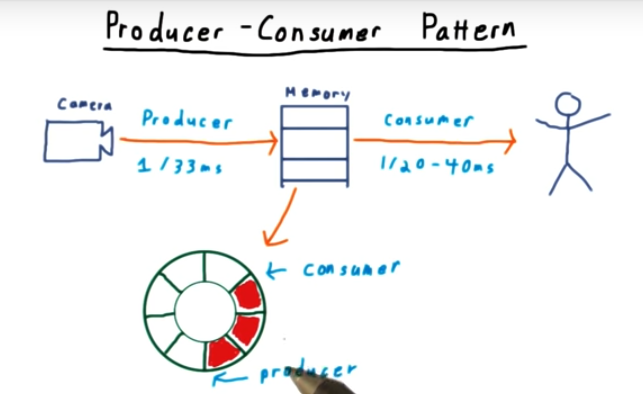

Producer-Consumer Pattern

Summary

One thread copies frames from the camera, and another thread consumes each frame for analysis. To support this hand-off, use a ring buffer.
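A ring buffer on its own is a small data structure. The sketch below is my own minimal version, with frames represented as plain ints and a made-up `RING_CAPACITY`. It is deliberately not thread-safe.

```c
#include <assert.h>
#include <stddef.h>

#define RING_CAPACITY 4  /* hypothetical number of frame slots */

struct ring {
    int    frames[RING_CAPACITY];
    size_t head;   /* next slot to read */
    size_t tail;   /* next slot to write */
    size_t count;  /* number of occupied slots */
};

void ring_init(struct ring *r)
{
    assert(r != NULL);
    r->head = r->tail = r->count = 0;
}

/* Stores a frame. Returns 0 on success, -1 if the buffer is full. */
int ring_put(struct ring *r, int frame)
{
    if (r->count == RING_CAPACITY)
        return -1;
    r->frames[r->tail] = frame;
    r->tail = (r->tail + 1) % RING_CAPACITY;  /* wrap around */
    r->count++;
    return 0;
}

/* Removes the oldest frame. Returns 0 on success, -1 if the buffer is empty. */
int ring_get(struct ring *r, int *frame)
{
    if (r->count == 0)
        return -1;
    *frame = r->frames[r->head];
    r->head = (r->head + 1) % RING_CAPACITY;
    r->count--;
    return 0;
}
```

Used from a single thread this works fine. Once a producer and a consumer call `ring_put` and `ring_get` concurrently, the unprotected updates to `count`, `head`, and `tail` become a race.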

Quiz: What is the problem with this code

Summary

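I don’t see any mutual exclusion when accessing the buffer. This is easier to spot if you understand compilers and register allocation: an update to a shared variable compiles to a load, a modify in a register, and a store, and two threads can interleave those steps.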

Mutex Lock

Summary

Use a mutual exclusion lock (i.e. a mutex) to protect shared memory.
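The classic demonstration is two threads incrementing one shared counter. This sketch is mine (the names `counter_lock`, `add_many`, and `count_with_two_threads` are assumptions); without the lock, some increments would typically be lost.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

static pthread_mutex_t counter_lock = PTHREAD_MUTEX_INITIALIZER;
static long counter = 0;  /* shared by both threads */

static void *add_many(void *arg)
{
    int n = *(const int *)arg;
    for (int i = 0; i < n; i++) {
        pthread_mutex_lock(&counter_lock);
        counter++;  /* load, add, store: must not interleave with the other thread */
        pthread_mutex_unlock(&counter_lock);
    }
    return NULL;
}

/* Two threads each add `increments`; returns the final count, or -1 on failure. */
long count_with_two_threads(int increments)
{
    assert(increments >= 0);

    pthread_t a, b;
    counter = 0;
    if (pthread_create(&a, NULL, add_many, &increments) != 0)
        return -1;
    if (pthread_create(&b, NULL, add_many, &increments) != 0) {
        pthread_join(a, NULL);
        return -1;
    }
    pthread_join(a, NULL);
    pthread_join(b, NULL);
    return counter;
}
```

With the mutex in place, `count_with_two_threads(100000)` returns exactly 200000 on every run.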

* Quiz: What is wrong with this program (Summary: The code snippet offers no concurrency, but there’s no deadlock. And two threads can certainly use the same mutex: that’s the whole point.)

* Quiz: What is wrong with this 2nd program (Summary: I’m probably spending more time than necessary on this quiz, but I really want to reason about the code.)

How to solve the problem

Summary

Get rid of the mutex around the while loop. But this is inefficient: the waiting thread spins, burning CPU while it repeatedly re-checks the condition (busy-waiting).

Mutex vs Synchronization

Summary

Mutual exclusion and synchronization are two different problems. A mutex ensures no two threads access a shared resource at the same time. In contrast, synchronization helps us control the flow of execution between threads, for example when we want thread 1 to perform some action before thread 2 executes some other action. I sort of remember this from my operating systems course, but need a refresher. I know the producer/consumer problem is a classic synchronization problem.

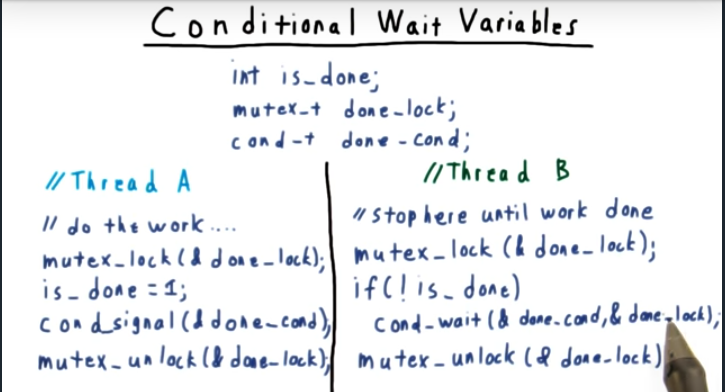

Conditional Wait Variables

Summary

To implement synchronization with condition variables, we need three things: some global/shared variable, a mutex to protect this variable, and a condition variable (in POSIX, a pthread_cond_t; related to, but not the same as, a semaphore).
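The three ingredients can be sketched as one struct plus a waiter and a signaler. Everything here (`struct handoff`, `first_step`, `second_step`, `run_in_order`) is a made-up example of forcing one thread's action to happen before another's.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

struct handoff {
    pthread_mutex_t lock;        /* ingredient 2: protects the fields below */
    pthread_cond_t  ready_cond;  /* ingredient 3: the condition variable */
    int             ready;       /* ingredient 1: the shared variable */
    int             log[2];      /* records the order in which steps ran */
    int             log_len;
};

static void *first_step(void *arg)
{
    struct handoff *h = arg;
    pthread_mutex_lock(&h->lock);
    h->log[h->log_len++] = 1;
    h->ready = 1;
    pthread_cond_signal(&h->ready_cond);  /* wake the waiter, if any */
    pthread_mutex_unlock(&h->lock);
    return NULL;
}

static void *second_step(void *arg)
{
    struct handoff *h = arg;
    pthread_mutex_lock(&h->lock);
    while (!h->ready)  /* re-check the shared variable after every wake-up */
        pthread_cond_wait(&h->ready_cond, &h->lock);
    h->log[h->log_len++] = 2;
    pthread_mutex_unlock(&h->lock);
    return NULL;
}

/* Runs both steps on separate threads; returns 0 on success, -1 otherwise. */
int run_in_order(int log[2])
{
    assert(log != NULL);

    struct handoff h = { .ready = 0 };
    pthread_mutex_init(&h.lock, NULL);
    pthread_cond_init(&h.ready_cond, NULL);

    pthread_t second, first;
    int rc = -1;
    /* Start the waiter first to show that start order does not matter. */
    if (pthread_create(&second, NULL, second_step, &h) == 0) {
        if (pthread_create(&first, NULL, first_step, &h) == 0)
            pthread_join(first, NULL);
        else
            first_step(&h);  /* run on the caller so the waiter is released */
        pthread_join(second, NULL);
        log[0] = h.log[0];
        log[1] = h.log[1];
        rc = 0;
    }
    pthread_cond_destroy(&h.ready_cond);
    pthread_mutex_destroy(&h.lock);
    return rc;
}
```

Whichever thread the scheduler runs first, the log always ends up as 1 then 2, because the second step cannot proceed until `ready` is set.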

Return to digital tracker

Summary

The conditional wait function (pthread_cond_wait in POSIX) performs two actions. First, it places the calling thread onto a waiting queue. Second, it unlocks the mutex that’s passed in, allowing other threads to make forward progress; the mutex is re-acquired before the call returns. Also, we need to change the if predicate to a while check: by the time the woken thread runs again, another thread may have already changed the shared state, so the condition must be re-checked and an if statement would be insufficient.
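Putting the pieces together for the tracker, a producer/consumer pair over a ring buffer might be sketched like this. This is my own reconstruction rather than the lecture's code: frames are plain ints, `SLOTS` is a made-up capacity, and "analysis" is reduced to summing the frame values.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

#define SLOTS 4  /* hypothetical ring buffer capacity */

struct tracker {
    pthread_mutex_t lock;
    pthread_cond_t  not_empty;
    pthread_cond_t  not_full;
    int  frames[SLOTS];
    int  head, tail, count;
    int  total_frames;   /* how many frames to pass through; read-only once threads start */
    long consumed_sum;   /* written only by the consumer */
};

static void *producer(void *arg)
{
    struct tracker *t = arg;
    for (int f = 1; f <= t->total_frames; f++) {
        pthread_mutex_lock(&t->lock);
        while (t->count == SLOTS)  /* while, not if: re-check after waking */
            pthread_cond_wait(&t->not_full, &t->lock);
        t->frames[t->tail] = f;
        t->tail = (t->tail + 1) % SLOTS;
        t->count++;
        pthread_cond_signal(&t->not_empty);
        pthread_mutex_unlock(&t->lock);
    }
    return NULL;
}

static void *consumer(void *arg)
{
    struct tracker *t = arg;
    for (int i = 0; i < t->total_frames; i++) {
        pthread_mutex_lock(&t->lock);
        while (t->count == 0)
            pthread_cond_wait(&t->not_empty, &t->lock);
        int f = t->frames[t->head];
        t->head = (t->head + 1) % SLOTS;
        t->count--;
        pthread_cond_signal(&t->not_full);
        pthread_mutex_unlock(&t->lock);
        t->consumed_sum += f;  /* stand-in for analyzing the frame */
    }
    return NULL;
}

/* Pushes frames 1..total_frames through the buffer; returns their sum, or -1. */
long run_tracker(int total_frames)
{
    assert(total_frames >= 0);

    struct tracker t = { .total_frames = total_frames };
    pthread_mutex_init(&t.lock, NULL);
    pthread_cond_init(&t.not_empty, NULL);
    pthread_cond_init(&t.not_full, NULL);

    pthread_t prod, cons;
    long result = -1;
    if (pthread_create(&prod, NULL, producer, &t) == 0) {
        if (pthread_create(&cons, NULL, consumer, &t) == 0)
            pthread_join(cons, NULL);
        else
            consumer(&t);  /* drain on the caller so the producer can finish */
        pthread_join(prod, NULL);
        result = t.consumed_sum;
    }
    pthread_cond_destroy(&t.not_full);
    pthread_cond_destroy(&t.not_empty);
    pthread_mutex_destroy(&t.lock);
    return result;
}
```

Neither thread spins: a full buffer parks the producer on `not_full`, an empty one parks the consumer on `not_empty`, and each wait releases the mutex so the other side can make progress.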

Program Analysis

Summary

When writing multi-threaded code, we need to evaluate whether the program exhibits three characteristics: concurrency, absence of deadlock, and mutual exclusion of shared memory.

References

1 – https://classroom.udacity.com/courses/ud098

2 – https://man7.org/linux/man-pages/man3/pthread_join.3.html

3 – https://man7.org/linux/man-pages/man3/pthread_exit.3.html