How can the OS make use of a larger, slower device to transparently provide the illusion of a large virtual address space?

Overview

Why create a virtual address space that is larger than the amount of physical memory installed on the system? Because the illusion of a larger address space is a useful abstraction for application developers: they simply request more memory and get it, thanks to the operating system.

To this end, the OS takes advantage of the memory hierarchy. At the top sit the fastest caches (L1, L2, L3), and at the bottom sit hard disks, which can be as much as 10,000 times slower. Speed and cost rise together: fast storage is expensive, and slow storage is cheap.

Essentially, the OS reserves a portion of the disk as swap space, the area that pages are swapped out to (from memory to disk) and swapped in from (from disk to memory). How does the OS determine which pages currently live in memory and which live on disk? By looking at the metadata in the page table entry (PTE). More specifically, if the present bit is set, the page lives in a physical frame in memory. Otherwise, it lives on disk.
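To make the present bit concrete, here is a minimal sketch of how a PTE might pack that flag next to a location. The layout (bit 0 as the present bit, a 12-bit shift) and the helper names `make_pte`, `is_present`, and `location` are assumptions for illustration, not the format of any real architecture.

```python
# Hypothetical PTE layout, for illustration only:
#   bit 0        -> present bit
#   bits 12 and up -> physical frame number if present, else swap slot number
PRESENT_BIT = 0x1
SHIFT = 12


def make_pte(number: int, present: bool) -> int:
    """Pack a frame number (or swap slot) and the present bit into one integer."""
    return (number << SHIFT) | (PRESENT_BIT if present else 0)


def is_present(pte: int) -> bool:
    """True if the page currently lives in physical memory."""
    return bool(pte & PRESENT_BIT)


def location(pte: int) -> int:
    """Frame number when present, swap slot number otherwise."""
    return pte >> SHIFT


in_memory = make_pte(number=7, present=True)
on_disk = make_pte(number=42, present=False)

for pte in (in_memory, on_disk):
    where = "frame" if is_present(pte) else "swap slot"
    print(f"{where} {location(pte)}")
```

Reusing the same bits for either a frame number or a swap slot is one plausible design: only one of the two is meaningful at a time, and the present bit says which.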

When the page lives on disk, the page-fault handler must be invoked. With the present bit set to 0, the handler finds the page in swap (its disk address is also stored in the PTE), reads it into a physical frame (one that was already free or was freed by the page replacement policy), sets the present bit to 1, and finally retries the instruction that generated the page fault (i.e. the memory access made while the present bit was 0).

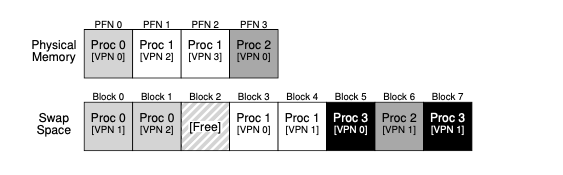

21.1 Swap Space

Summary

The swap space can be much larger than physical memory, allowing the OS to present a large amount of memory to processes and program developers.

21.2 The Present Bit

Summary

The present bit is a flag in the page table entry that tells whoever services a TLB (translation lookaside buffer) miss, hardware or OS software, whether the page lives in physical memory or has been swapped out to disk. If the flag is set to 0, the page-fault handler must be invoked. Also, on a TLB miss the page table is located via the page table register, which raises another question for me: whose responsibility is it to set the page table register? Probably the OS, since the OS is responsible for each per-process page table. The OS is a beast!

21.3 The Page Fault

Summary

On a TLB miss, the hardware (or the OS, for a software-managed TLB) checks the PTE, and if the PTE's present bit is set to 0, the page-fault handler runs. The handler checks the PTE's metadata for the location of the page on disk (or whatever the underlying swap mechanism is) and reads the page into memory. Then the faulting instruction is retried, and the TLB (hardware- or software-managed) updates its cache with the translation. While the disk I/O for the page fault is in flight, the process is put into a blocked state so other processes can run: concurrency for the win!
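The fault-then-retry flow can be sketched as a toy simulation. Everything here is a hypothetical stand-in: the `PageFault` exception plays the role of the hardware trap, dictionaries model the TLB and page table, and the disk read and process blocking are elided.

```python
class PageFault(Exception):
    """Hypothetical stand-in for the trap raised when the present bit is 0."""


def translate(vpn, tlb, page_table):
    """Return the frame number for a virtual page number, or raise PageFault."""
    if vpn in tlb:                      # TLB hit: translation already cached
        return tlb[vpn]
    pte = page_table[vpn]               # TLB miss: consult the page table
    if not pte["present"]:
        raise PageFault(vpn)            # page is in swap, not in memory
    tlb[vpn] = pte["pfn"]               # cache the translation in the TLB
    return pte["pfn"]


def access(vpn, tlb, page_table, next_free_frame):
    """Translate a page, servicing one page fault and retrying if needed."""
    try:
        return translate(vpn, tlb, page_table)
    except PageFault:
        # OS handler: the read from pte["swap_slot"] would happen here,
        # with the process blocked while the I/O is in flight.
        page_table[vpn].update(present=True, pfn=next_free_frame)
        return translate(vpn, tlb, page_table)  # retry the access


tlb = {}
page_table = {0: {"present": False, "pfn": None, "swap_slot": 17}}
pfn = access(0, tlb, page_table, next_free_frame=4)
print(pfn, tlb)
```

Note that the retry goes through `translate` again, so it takes a TLB miss first and only then caches the translation.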

21.4 What if Memory is Full

Summary

If no physical frame is free, the OS must invoke the page replacement policy (such as LRU) to pick a page to evict before the new page can be brought in.
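As an illustration of one such policy, here is a minimal LRU sketch. The class name `LRUFrames` and its `access` method are hypothetical; a real OS usually approximates LRU rather than tracking exact access order as this does.

```python
from collections import OrderedDict


class LRUFrames:
    """Track resident pages and evict the least recently used one when full."""

    def __init__(self, num_frames):
        self.num_frames = num_frames
        self.resident = OrderedDict()   # keys are pages, least recent first

    def access(self, page):
        """Touch a page; return the evicted page, or None if none was evicted."""
        if page in self.resident:
            self.resident.move_to_end(page)   # mark as most recently used
            return None
        victim = None
        if len(self.resident) >= self.num_frames:
            victim, _ = self.resident.popitem(last=False)  # drop the LRU page
        self.resident[page] = None
        return victim


mem = LRUFrames(num_frames=3)
evictions = [mem.access(p) for p in [0, 1, 2, 0, 3]]
print(evictions)
```

In this run, page 1 is the victim when page 3 arrives, because page 0 was touched again after pages 1 and 2.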

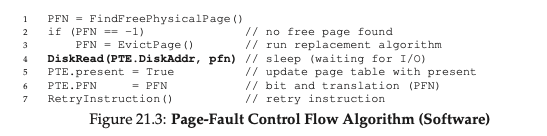

21.5 Page Fault Control Flow

Summary

When a page fault occurs, the hardware (TLB) and software (OS) must carry out a sequence of actions, depending on the scenario. Focusing on the OS: on a page fault, it must find a free physical frame (evicting a page if none is free), read the page's contents from swap into that frame, update the PTE by setting the present bit to 1 and recording the page frame number, and finally retry the instruction.
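The OS-side steps can be sketched roughly as follows. This is a simplified model under stated assumptions: the `PTE` dataclass, the `handle_page_fault` function, and the `evict_page` callback are hypothetical names, swap and memory are plain dictionaries, and retrying the instruction is left to the caller.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PTE:
    present: bool = False
    pfn: Optional[int] = None        # page frame number, valid when present
    disk_addr: Optional[int] = None  # swap location, used when not present


def handle_page_fault(pte, free_frames, swap, memory, evict_page):
    """Bring the page behind `pte` into memory and return its frame number."""
    # 1. Find a free physical frame, evicting a page if none is free.
    pfn = free_frames.pop() if free_frames else evict_page()
    # 2. Populate the frame with the page's contents from swap.
    memory[pfn] = swap[pte.disk_addr]
    # 3. Update the PTE: set the present bit and record the frame number.
    pte.present = True
    pte.pfn = pfn
    # 4. The caller would now retry the faulting instruction.
    return pfn


def no_eviction():
    raise RuntimeError("eviction not expected in this example")


swap = {5: b"page contents"}
memory = {}
free_frames = [3]
pte = PTE(disk_addr=5)

handle_page_fault(pte, free_frames, swap, memory, evict_page=no_eviction)
print(pte)
```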

21.6 When Replacements Really Occur

Summary

To ensure there are always some free pages available, the swap (or page) daemon runs in the background, evicting pages to free up memory for running processes and for the OS.
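One common way to structure such a daemon is with a low and a high watermark: it does nothing until the free-frame count drops below the low watermark, then evicts until the count reaches the high watermark. The sketch below assumes that design. The function name `run_swap_daemon`, the threshold values, and the oldest-first eviction order are illustrative choices, not something these notes specify.

```python
from collections import deque


def run_swap_daemon(free_frames, resident, low_watermark, high_watermark):
    """Evict pages until `high_watermark` frames are free; return evicted pages.

    Does nothing unless the free-frame count is below `low_watermark`.
    `resident` is a deque of (page, frame) pairs, oldest first.
    """
    evicted = []
    if len(free_frames) >= low_watermark:
        return evicted                       # enough free memory, stay asleep
    while len(free_frames) < high_watermark and resident:
        page, frame = resident.popleft()     # pick the oldest resident page
        evicted.append(page)                 # its write to swap would go here
        free_frames.append(frame)
    return evicted


free_frames = [9]
resident = deque([("A", 0), ("B", 1), ("C", 2), ("D", 3)])
evicted = run_swap_daemon(free_frames, resident, low_watermark=2, high_watermark=4)
print(evicted, free_frames)
```

Evicting several pages in one pass, rather than one per fault, lets the OS batch the writes to swap.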