In the context of an operating system, what does structure even mean, and why is it important? Structure determines how the operating system serves applications and manages the underlying hardware, and how it balances the following qualities: protection, performance, flexibility, scalability, agility, and responsiveness.

To obtain the above qualities, several designs exist, including monolithic and microkernel. In a monolithic OS, there’s a division between applications running in user space and the operating system’s core services (e.g. file systems, memory management, scheduling), which run together in the kernel. The microkernel design takes this division a step further by moving the core services out of the kernel so that they all run in user space. Each approach has trade-offs. A monolithic operating system performs better because its services call each other directly inside the kernel, which reduces the number of crossings between user and privileged mode; a microkernel operating system gives up that performance. However, microkernel operating systems offer greater extensibility, allowing applications to select which OS core services cater to their needs.

Introduction

Summary

We will study the structure of an OS, whatever that means. To this end, we’ll learn about the SPIN and Exokernel approaches, two terms I’m unfamiliar with, and then a microkernel.

Quiz: OS System Services

Summary

I got most of the services, apart from inter-process communication (IPC) and thread scheduling. The others I named included access to I/O devices, access to the network, and memory management (protection, sharing, demand paging).

OS Structure

Summary

Structure determines how the OS serves applications and manages the underlying hardware.

Goals of OS Structure

Summary

There are six goals of OS structure: protection (of users from themselves, between users, and of course of the OS itself), performance (the time taken for the OS to carry out its services), flexibility (not one size fits all; the OS should be able to adapt to different applications), scalability (essentially, if the hardware improves, so should the performance of the operating system), agility (how quickly the OS adapts to a changing environment), and responsiveness (how quickly the OS reacts to events, like I/O).

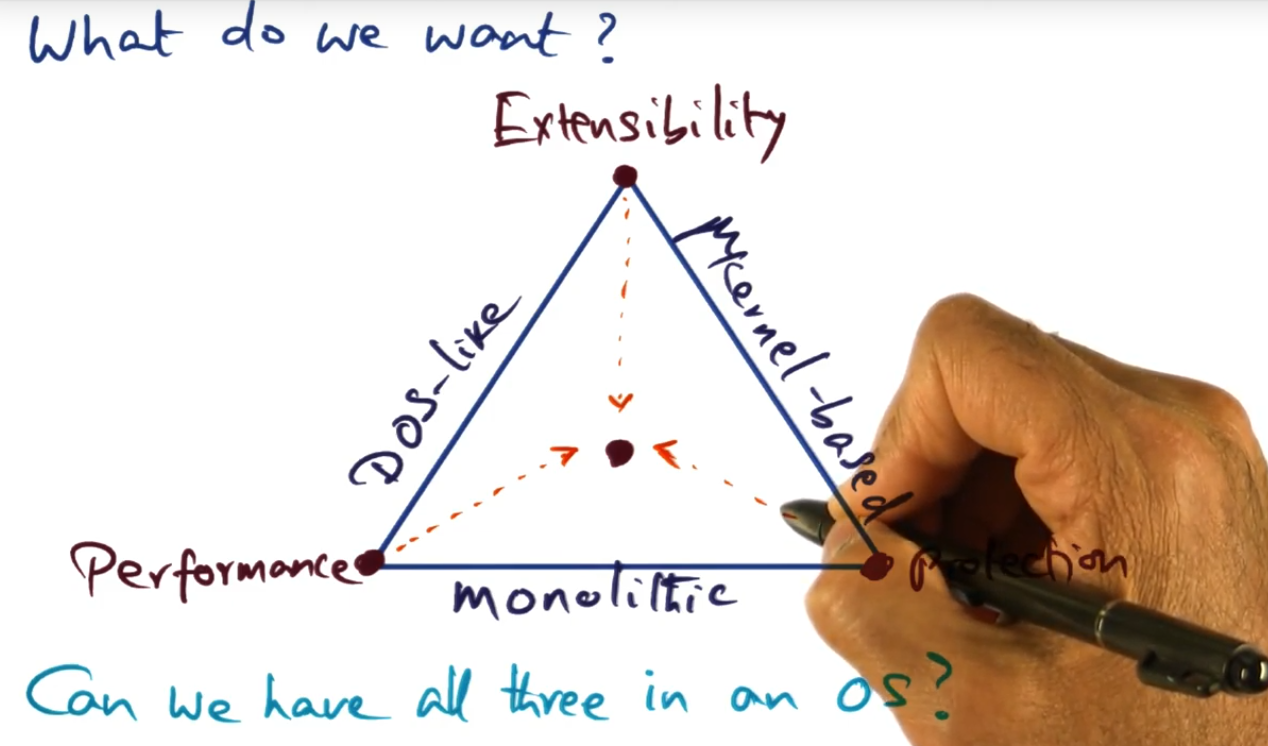

Commercial OS

Summary

Apparently I’ll learn that, somehow, system designers can have their cake and eat it too. That is, they can achieve performance without really sacrificing protection and flexibility. How? Who knows, though I should find out soon.

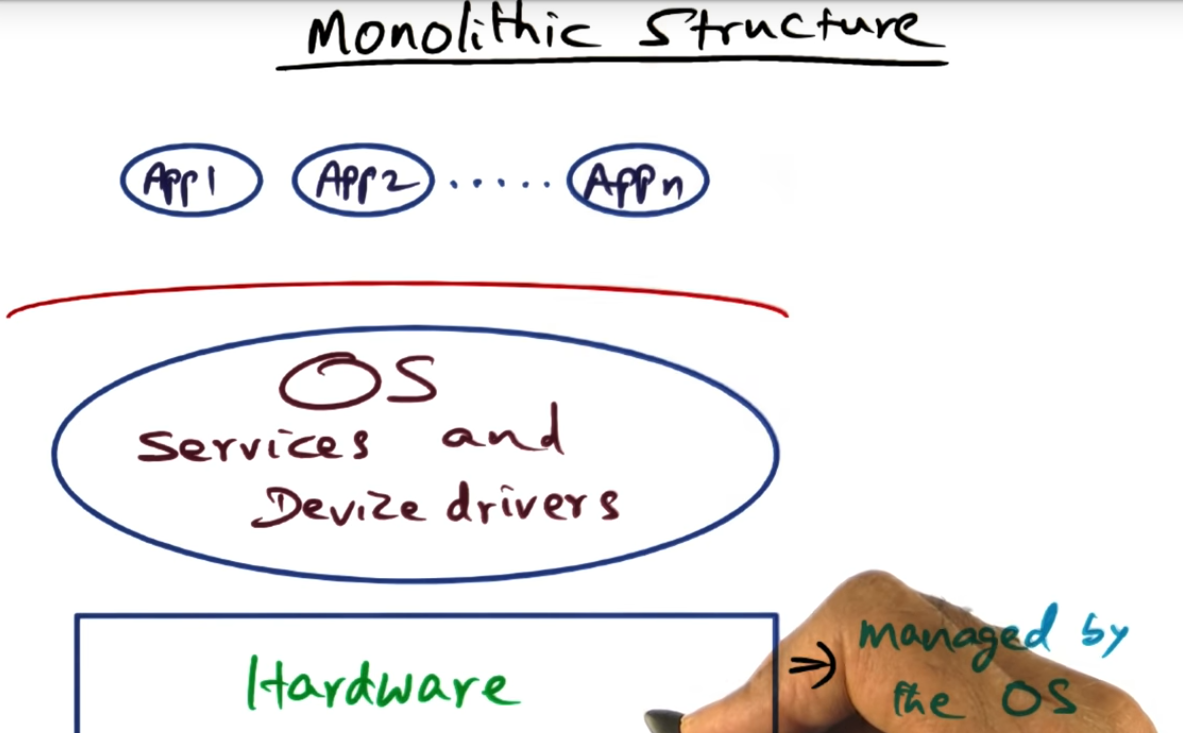

Monolithic Structure

Summary

Why do we call it a monolithic structure? Because the big blob (see photo) contains all the services (e.g. file system, network access, memory management, scheduling) offered by the operating system

DOS-like Structure

Summary

At a glance, the DOS-like structure seems indistinguishable from monolithic. But how is it different?

Quiz: DOS-like Structure Pros and Cons

Summary

Seems like DOS allows applications to run in the same address space as the OS, so no trapping is necessary: an OS service is just a procedure call. The trade-off is a lack of protection; the OS can get corrupted by a running application.
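To make the protection trade-off concrete, here is a minimal toy model, not how any real OS is implemented. The `Machine` class, the 16-byte memory, and the layout (OS state in bytes 0 to 7) are all assumptions invented for illustration. With protection off, a buggy application write lands on OS state; with protection on, the same write is refused.

```python
class ProtectionFault(Exception):
    """Raised when an application touches memory outside its own region."""


class Machine:
    """Toy machine with one flat memory shared by the OS and one application."""

    def __init__(self, protected):
        self.memory = bytearray(16)    # toy physical memory
        self.os_region = range(0, 8)   # assumed layout: OS lives in bytes 0-7
        self.protected = protected
        self.memory[0] = 0xAA          # stand-in for critical OS state

    def app_write(self, addr, value):
        # With protection, a write into the OS region is rejected
        # (a real machine would raise a hardware fault instead).
        if self.protected and addr in self.os_region:
            raise ProtectionFault(f"app may not write address {addr}")
        self.memory[addr] = value


# DOS-like: nothing stops a buggy app from scribbling over OS state.
dos_like = Machine(protected=False)
dos_like.app_write(0, 0x00)

# Protected structure: the same write is blocked.
monolithic = Machine(protected=True)
try:
    monolithic.app_write(0, 0x00)
    blocked = False
except ProtectionFault:
    blocked = True
```

After running this, `dos_like.memory[0]` has been overwritten while `monolithic.memory[0]` still holds its original value.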

DOS-like Structure (continued)

Summary

A DOS-like structure and its lack of protection are unacceptable for a general-purpose OS. On the other hand, a monolithic structure offers protection but pays for it in performance, since every service request traps into the kernel. But we also need to consider how the OS can be tailored to the needs of different applications, that is, customization.

Opportunities for customization

Summary

Learned a little more about why customization might be important. I thought that page fault handling should be the same for every process, but there’s a missed opportunity here. Since the OS sort of bets on processes not requiring their entire address space, this bet might be expensive if the page fault handler (along with the eviction algorithm) runs unnecessarily. So perhaps the OS can learn more about the process and use that to inform the page replacement policy.
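A small simulation shows why one replacement policy does not fit every application. This is only a sketch under assumptions of my own: the function `count_faults`, the looping-scan workload, and the choice of LRU versus MRU are illustrative and do not come from the lecture. For a process that scans slightly more pages than it has frames, LRU faults on every access, while MRU keeps most of the working set resident.

```python
from collections import OrderedDict


def count_faults(refs, frames, policy="lru"):
    """Count page faults for a reference string under LRU or MRU eviction."""
    resident = OrderedDict()  # keys ordered from least to most recently used
    faults = 0
    for page in refs:
        if page in resident:
            resident.move_to_end(page)  # hit: mark as most recently used
            continue
        faults += 1
        if len(resident) >= frames:
            # LRU evicts the oldest entry; MRU evicts the newest one.
            resident.popitem(last=(policy == "mru"))
        resident[page] = None
    return faults


# Hypothetical workload: scan 5 pages in a loop, 4 times, with 4 frames.
scan = list(range(5)) * 4
lru_faults = count_faults(scan, frames=4, policy="lru")  # 20: every access faults
mru_faults = count_faults(scan, frames=4, policy="mru")  # 8
```

If the OS knew the application was doing a looping scan, it could pick the second policy for that process only, which is the kind of customization the lecture is hinting at.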

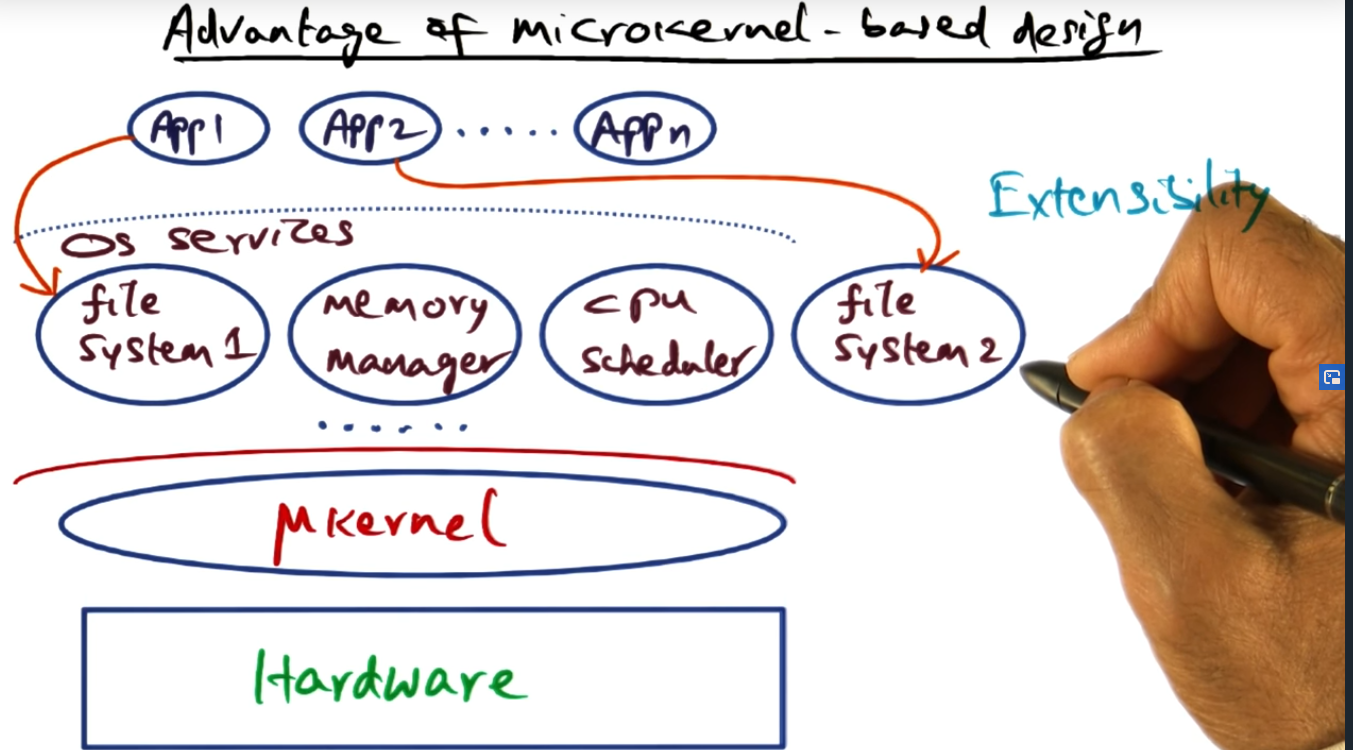

Microkernel based OS Structure

Summary

Why the hell do we want to overcomplicate the OS design by introducing a microkernel-based OS structure? What’s the gain here? Extensibility! Previously, application 1 and application 2 would both access the same file system, memory manager, and scheduler built into the kernel, so there’s a lack of extensibility. In a microkernel design, each of those servers (e.g. file system, memory management, CPU scheduler) runs in user space and needs some sort of IPC to talk to the others, which means going through the microkernel via system calls. I think this is expensive; that is, we are sacrificing performance. Will find out shortly in the next lesson.
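Here is a rough sketch of the idea, assuming a made-up `Microkernel` class that does nothing except route messages to user-level servers. The service names and handlers are hypothetical. It shows both sides of the trade: applications can choose between alternative servers, and every request costs a pair of IPC messages through the kernel.

```python
class Microkernel:
    """Toy microkernel: it only routes messages between user-level servers."""

    def __init__(self):
        self.servers = {}    # service name -> handler function
        self.ipc_count = 0   # messages routed so far

    def register(self, name, handler):
        self.servers[name] = handler

    def send(self, name, request):
        self.ipc_count += 1                  # request crosses into the server
        reply = self.servers[name](request)
        self.ipc_count += 1                  # reply crosses back to the caller
        return reply


kernel = Microkernel()

# Two hypothetical file system servers; each application picks the one it wants.
kernel.register("fs.default", lambda path: f"default read of {path}")
kernel.register("fs.log_structured", lambda path: f"log-structured read of {path}")

reply_a = kernel.send("fs.default", "/etc/hosts")
reply_b = kernel.send("fs.log_structured", "/var/db/data")
```

Two requests already add up to four routed messages in `kernel.ipc_count`, whereas inside a monolithic kernel the same work would be ordinary procedure calls.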

Downside to the Microkernel

Summary

The biggest downside of the microkernel approach is the potential performance hit. For an application to talk to the file system (or other servers), a lot of IPC is needed. Data flowing between services requires IPC calls to marshal data in and out between applications and servers, and between servers as well.
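Marshaling is easier to picture with a small example. The sketch below uses Python’s `struct` module to pack a read request into bytes and unpack it on the other side. The message layout (file id, offset, length) and the `file_server` function are assumptions made up for illustration, not a real IPC protocol.

```python
import struct

# Assumed wire format for a hypothetical "read" request:
# 4-byte file id, 8-byte offset, 4-byte length, little-endian, no padding.
READ_REQUEST = struct.Struct("<IQI")


def marshal_read(file_id, offset, length):
    """Application side: flatten the arguments into a byte message."""
    return READ_REQUEST.pack(file_id, offset, length)


def unmarshal_read(message):
    """Server side: recover the arguments from the byte message."""
    return READ_REQUEST.unpack(message)


def file_server(message):
    """Hypothetical user-level file server handling one read request."""
    file_id, offset, length = unmarshal_read(message)
    data = bytes(length)                        # placeholder file contents (zeros)
    return struct.pack("<I", len(data)) + data  # reply: length header + payload copy


request = marshal_read(file_id=3, offset=4096, length=16)
reply = file_server(request)
(reply_len,) = struct.unpack_from("<I", reply)
payload = reply[4:4 + reply_len]
```

Every request and every reply is packed, copied, and unpacked, which is work a monolithic kernel avoids by passing pointers between its components.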

Why performance loss

Summary

Memory locality changes because we need to context switch between address spaces, which dramatically impedes performance. The same goes for copying data between user space and system space. This is unlike the monolithic structure, in which the services can share data easily with one another, although it is a little more inflexible.

What do we want?

Summary

Is it possible to get the OS to exhibit all three desirable characteristics (performance, extensibility, and protection) at the same time? Apparently it is, but I’ll have to wait until the next module.